Artificial Intelligence (AI) and Probation Services by Mike Nellis

It is no longer in science fiction alone that AI (“artificial intelligence”) figures in futuristic forms of crime control, but its real-world implications – least of all for probation services – are far from clear. This is surprising, because while AI is not exactly new, the precise technologies grouped under this loose and ambiguous rubric – driven by commercial investment and political expediency – continually increase in reach and power, surpassing what most laypeople can imagine might happen, while state and corporate actors relentlessly envision and enact new uses of it. AI’s impact on probation services may be minimal now, and its trajectory is genuinely hard to discern, but some probation services have already appointed AI specialists to their senior management teams, and there is a debate to be had about what is coming next.

Everybody knows, surely, that the emergence of AI has major implications for employment in all the so-called human service professions – reconfiguration of some kind, and increased efficiency (yes, again!), are part of what the energising, but complacent, narrative of the “fourth industrial revolution” promises, obliterating the losses that may be incurred en route. Probation services will not be immune from this. In Europe they have arguably been slow to acknowledge the occupational implications of AI and, if they are paying attention at all, too accommodating of talk about its inevitability, necessity and managerial “potential”. Probation’s relative silence on its likely future shape under “surveillance capitalism” (a more critical counter-narrative to that of the “fourth industrial revolution”, but by no means the final word) must end if wise decisions, viable tactics and strategic alliances are to be made and developed in respect of AI.

Electronic monitoring (EM) may be the template through which probation services are appraising the potential impact of AI because, in Europe, EM has by and large been effectively constrained (so far) by the ethos and culture of probation. But that would be a mistake: AI has far more comprehensive implications for probation services than EM, not least for the size, structure and skillset of the workforce, as well as the transformation of supervisory practices themselves. That said, the steady adoption of smartphones and laptops to aid supervision, sometimes framed as a “further development” of EM and accelerated by the Covid pandemic’s constraints on face-to-face working, is a possible stepping stone towards the use of AI. In the US, the National Institute of Justice had commissioned action research on AI and smartphone-based EM before the pandemic, indicating, if nothing else, what thought leaders in corrections are thinking. Compared to humanistic forms of probation, and even to electronic location monitoring, voice, text and camera-based smartphone supervision can generate vastly greater amounts of data about offenders’ lifestyles, dispositions and behaviour, without ever fully recognising them as “persons”.

Data is what AI systems train on, process and infer from, with a view to predicting what’s next (imminent or eventual) at varying levels of granularity – individual, institutional, corporate, electoral, demographic, societal – even genetic, molecular and viral if AI is being used to predict disease and pandemic trajectories. Predictivity – of consumer choices and market development – is the business model of “surveillance capitalism”, but it has already been infused into some aspects of policing – the prediction of individual or hot-spot offending – with mixed and ambivalent success, and is finding a place in other public services, predicting various aspects of client behaviour. Probation is no stranger to predictive technologies – risk management is mathematically premised on them – but the “online decision support systems” already becoming available to probation services (rudimentary forms of AI, with the potential to become much more if probation supplies them with more data) draw on much larger data streams, and normalise prediction (of compliance, need, risk and reoffending) as a feature of everyday practice in supervision. The specific ethics of predictive policing are already fraught – what should be done to pre-empt imminently predicted reoffending: immediate arrest or compassionate support? – but in a world where AI is becoming ubiquitous the ethics of predictivity itself are becoming harder to question.

Since 2014, major European institutions have become preoccupied with AI, believing it essential to economic prosperity and political security, aspiring to keep abreast of developments in China (where there are no state constraints on mass data collection, or on surveillance) and the USA (where the agenda is dominated by the interests and expertise of major tech companies). Europe, however, wants all uses of AI within its boundaries to be compliant with human rights, democratic processes and the rule of law. Reconciling human rights, and the forms of human life in which they have hitherto been grounded, with the intrinsically inhuman, machine-based character of AI is a taller order than it first appears. A range of European Commission and Council of Europe committees, sub-committees and working parties have nonetheless begun serious work on this. The implications of AI for policing and judicial decision-making were explored early, and in 2021 the Council of Europe’s PC-CP (Council for Penological Co-operation) began developing an ethical recommendation for the use of AI in both prisons and probation services, and in private companies which develop and deliver services on their behalf.

European institutions are realistically taking for granted that AI will grow in significance and affect everything. The danger here is uncritically accepting that those who devise and champion AI already have the power to make it happen, infuse it everywhere and overcome all resistance. But AI is not a natural, inexorable, evolutionary development – the forms, scale and pace of its deployment must always be contested, which requires greater “AI literacy” among those who might need to resist its encroachment. There is indeed technological ingenuity at AI’s root, but its capabilities and uses are politically and commercially driven, serving some interests and not others. AI’s rewards will, for certain, be unevenly distributed – unlike earlier waves of automation the jobs lost this time may have no replacement. Crucially, AI – because of the resources needed to build and administer it – will increase the power of the already powerful. There are many reasons to think that it will deepen social inequality and few reasons to think that it will extend or strengthen democracy. There are many ingrained social injustices – as probation services are all too aware – to which AI is manifestly not a solution, which it may become harder to talk about and act against. It is not a question of whether AI could be put to good uses – it could – but a question of whether it will, and who decides.

* Mike Nellis is Emeritus Professor of Criminal and Community Justice, University of Strathclyde, honorary member of CEP and one of two advisers to the current PC-CP work on AI in prisons and probation.

Related News

Keep up to date with the latest developments, stories, and updates on probation from across Europe and beyond. Find relevant news and insights shaping the field today.

New

Probation in Europe

New Vodcast Episode: Anke Spoorendonk on the Role of Probation in Justice Policy

21/05/2026

The 20th episode of Division_Y features Anke Spoorendonk, former State Minister of Justice in Schleswig-Holstein, Germany, representing the Danish minority party SSW.

New

Mental Health

CEP publishes European Mental Health Training Curriculum for Probation Staff and launches Pilot Implementation Initiative

19/05/2026

In this article, you can explore the newly published European Mental Health Training Curriculum for Probation Officers, learn about the call for a national pilot implementation, and find details about the upcoming webinar on 21 May presenting the curriculum modules.

New

Mental Health

European Mental Health Week: strengthening probation practice through mental health

13/05/2026

This week, during Mental Health Awareness Week, the Confederation of European Probation is highlighting the importance of mental health in probation practice across Europe.

New

Probation in Europe, Research

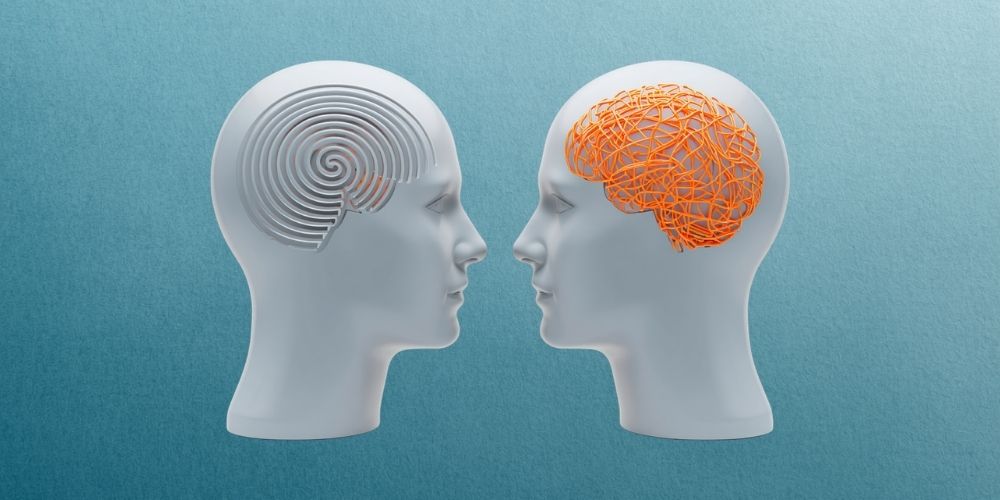

Free Research Resource: KrimDok

12/05/2026

Looking for reliable criminological literature? KrimDok is a free online database developed by the University of Tübingen and supported by the German Research Foundation (DFG).

The database contains nearly 400,000 references to books, journal articles, reports, and other publications covering criminology and related fields such as criminal justice, psychology, sociology, education, and law. It draws on a specialist criminology library established in 1969, with a collection of around 150,000 titles, and includes indexed articles from more than 200 academic journals.

Reading corner

Violent Extremism

New newsletter available: EU Knowledge Hub on Prevention of Radicalisation

11/05/2026

The latest edition of the EU Knowledge Hub newsletter brings together policy, research, and practice to address evolving radicalisation threats across Europe.

New

Gender-based violence

New European Master’s Programme on Perpetrator Intervention Launched

07/05/2026

The European Network for the Work with Perpetrators of Domestic Violence (WWP EN), in collaboration with Blanquerna – Universitat Ramon Llull (Barcelona), has launched a pioneering new programme:

Lifelong Learning Master’s Degree in Intervention Strategies with Perpetrators of Gender-Based Violence: Social, Clinical, and Legal Perspectives

This initiative represents the first international lifelong learning Master’s programme specifically focused on perpetrator intervention, offering a unique opportunity for professionals working to address and prevent gender-based violence across Europe and beyond.

Subscribe to our bi-monthly email newsletter!

"*" indicates required fields

- Keep up to date with important probation developments and insights.